Executive Summary:

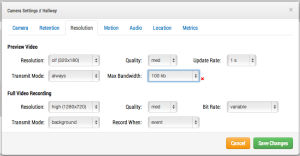

We recommend 100kbps per camera for our real-time preview stream. You can raise or lower the quality settings, but that figure is a safe average. We load balance and pool requests so that multiple people watching the same live stream view it through our cloud, and only a single live stream between the bridge and our cloud is required. This allows us to fit more cameras on the same bandwidth. In addition, full resolution video streams can be watched live or through our history browser. Our bandwidth management gets the most out of available bandwidth.

Example 1:

We have a low-bandwidth customer who has successfully deployed 8 HD cameras on our system on a 1.5Mbps connection. We use 0.8Mbps for our low resolution preview video. The customer has the ability to watch a live (or historic) HD video stream of any camera. The preview stream and background upload slow down while the full HD streaming is taking place. Once the HD stream ends, the preview stream speeds back up.
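The numbers in this example line up with the 100kbps per camera recommendation. As a rough sketch of the arithmetic (the function name is ours, purely for illustration, and is not part of any product API):

```python
def preview_bandwidth_kbps(cameras, per_camera_kbps=100):
    """Total preview bandwidth, assuming every camera uses the same rate."""
    return cameras * per_camera_kbps

link_kbps = 1500                            # the customer's 1.5Mbps connection
preview_kbps = preview_bandwidth_kbps(8)    # 8 HD cameras at 100kbps each
headroom_kbps = link_kbps - preview_kbps    # left for background and on-demand video

print(preview_kbps, headroom_kbps)  # 800 700
```

So roughly 0.7Mbps remains for background uploads and on-demand HD streams when previews run at the recommended rate.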

Example 2:

We replaced a camera installation at a daycare center. They were providing low resolution video to parents so they could check on their children. Using their previous system, they were only able to support ~20 simultaneous viewers. Using our platform, all 80+ parents can see the preview stream at the same time. The preview stream only uses 0.8Mbps to upload to our cloud and all the parents are served by our cloud.
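To see why serving viewers from the cloud matters, here is a hedged comparison. It assumes that in a direct-streaming setup each viewer pulls a separate stream from the site; we do not know exactly how the previous system worked, so treat the "direct" figure as illustrative only. The function and parameter names are hypothetical.

```python
def site_upload_kbps(cameras_watched, viewers_per_camera,
                     stream_kbps=100, via_cloud=True):
    """Upload bandwidth needed at the site for preview streams.

    via_cloud=True:  one stream per camera goes to the cloud, which serves viewers.
    via_cloud=False: assumed model where every viewer pulls a stream from the site.
    """
    if via_cloud:
        streams = cameras_watched
    else:
        streams = cameras_watched * viewers_per_camera
    return streams * stream_kbps

print(site_upload_kbps(8, 80))                   # 800
print(site_upload_kbps(8, 80, via_cloud=False))  # 64000
```

With the cloud in the middle, the site's upload stays constant no matter how many parents are watching.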

Technical Explanation:

When talking about bandwidth we segment it into three groups: real-time bandwidth, background bandwidth, and on-demand bandwidth. Real-time bandwidth is what the bridge and cameras use to send and receive metadata and preview images. By default we set it to 50kbps and recommend adjusting to 100kbps or until image updates are smooth.

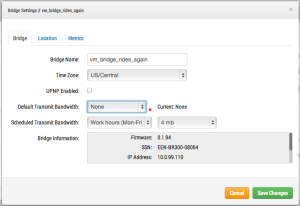

Background bandwidth is how we refer to background video uploading. When video is saved to the bridge it goes into a queue and is scheduled to be uploaded. The metadata travels ahead to the cloud. As bandwidth allows, the background video is sent up and removed from the bridge. The bridge allows for bandwidth limits to be scheduled so as to not interfere with other network traffic.
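The queueing behavior described above can be pictured with a small model. This is only a conceptual sketch, not the bridge's actual implementation; the class, method names, and sizes are made up for illustration.

```python
from collections import deque

class UploadQueue:
    """Toy model of the bridge's background upload queue."""

    def __init__(self):
        self.pending = deque()     # video segments still stored on the bridge
        self.cloud_metadata = []   # metadata is sent ahead right away
        self.cloud_video = []      # segments that have finished uploading

    def record(self, segment_id, size_kb):
        # Metadata travels ahead; the video itself waits in the queue.
        self.cloud_metadata.append(segment_id)
        self.pending.append((segment_id, size_kb))

    def upload(self, budget_kb):
        # Send queued segments in order while the bandwidth budget allows,
        # removing each one from the bridge once it is in the cloud.
        while self.pending and self.pending[0][1] <= budget_kb:
            segment_id, size_kb = self.pending.popleft()
            budget_kb -= size_kb
            self.cloud_video.append(segment_id)
        return budget_kb  # unused budget
```

For example, after recording a 300 KB and a 500 KB segment, calling `upload(600)` sends only the first one; the second stays on the bridge until more bandwidth is available.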

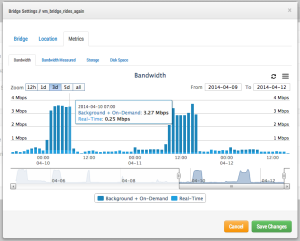

You can see that I have set the bridge to use no background bandwidth during working hours and 4Mbps during non-working hours. Here is what that bridge's metrics look like; they confirm those settings.
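A schedule like this boils down to a lookup by time of day. The sketch below assumes working hours of 09:00 to 17:00, which is our assumption for illustration; the actual hours are whatever you configure on the bridge, and the function is not a real bridge API.

```python
from datetime import time

WORK_START = time(9, 0)   # assumed start of working hours
WORK_END = time(17, 0)    # assumed end of working hours

def background_limit_kbps(now, work_limit_kbps=0, off_hours_limit_kbps=4000):
    """Return the background upload limit that applies at the given time of day."""
    if WORK_START <= now < WORK_END:
        return work_limit_kbps       # no background uploads during working hours
    return off_hours_limit_kbps      # 4Mbps outside working hours

print(background_limit_kbps(time(10, 30)))  # 0
print(background_limit_kbps(time(22, 0)))   # 4000
```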

On-demand bandwidth is the highest priority and can use all of the bandwidth otherwise allotted to real-time and background traffic. When something is requested from the bridge that has not been uploaded yet, it will be immediately pulled from the bridge and sent to the client. This is the case for live video. Our cloud will also transcode the video into other formats depending on the client’s request. Live streaming has a couple of seconds of latency. Video conversion adds additional latency for live viewing. Our goal is to keep live streaming within 2-3 seconds and live streaming with video conversion within 8-10 seconds.
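One way to picture this prioritization is a simple allocation in priority order. This is a simplified model, not our actual scheduler: the 1000kbps HD stream rate is a hypothetical figure, and serving real-time traffic before background traffic from whatever remains is our assumption for the sketch.

```python
def allocate_kbps(link_kbps, on_demand_kbps, realtime_limit_kbps, background_limit_kbps):
    """Split a link between the three bandwidth groups, on-demand first."""
    on_demand = min(on_demand_kbps, link_kbps)
    remaining = link_kbps - on_demand
    realtime = min(realtime_limit_kbps, remaining)     # assumed second priority
    remaining -= realtime
    background = min(background_limit_kbps, remaining)  # gets what is left
    return {"on_demand": on_demand, "realtime": realtime, "background": background}

# 1.5Mbps link, 0.8Mbps preview setting, 4Mbps background limit
print(allocate_kbps(1500, 0, 800, 4000))
# {'on_demand': 0, 'realtime': 800, 'background': 700}
print(allocate_kbps(1500, 1000, 800, 4000))
# {'on_demand': 1000, 'realtime': 500, 'background': 0}
```

While the HD stream is active, the preview and background traffic shrink; when it ends, they return to their configured limits.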
